Predict what future queries will need.

- Eviction: similar to an online coreset problem

- Compaction: eviction but with very large chunks

- Attention matching / STILL: inducing points

Supervised vs RL approach

LoRA 16, Static queries 64

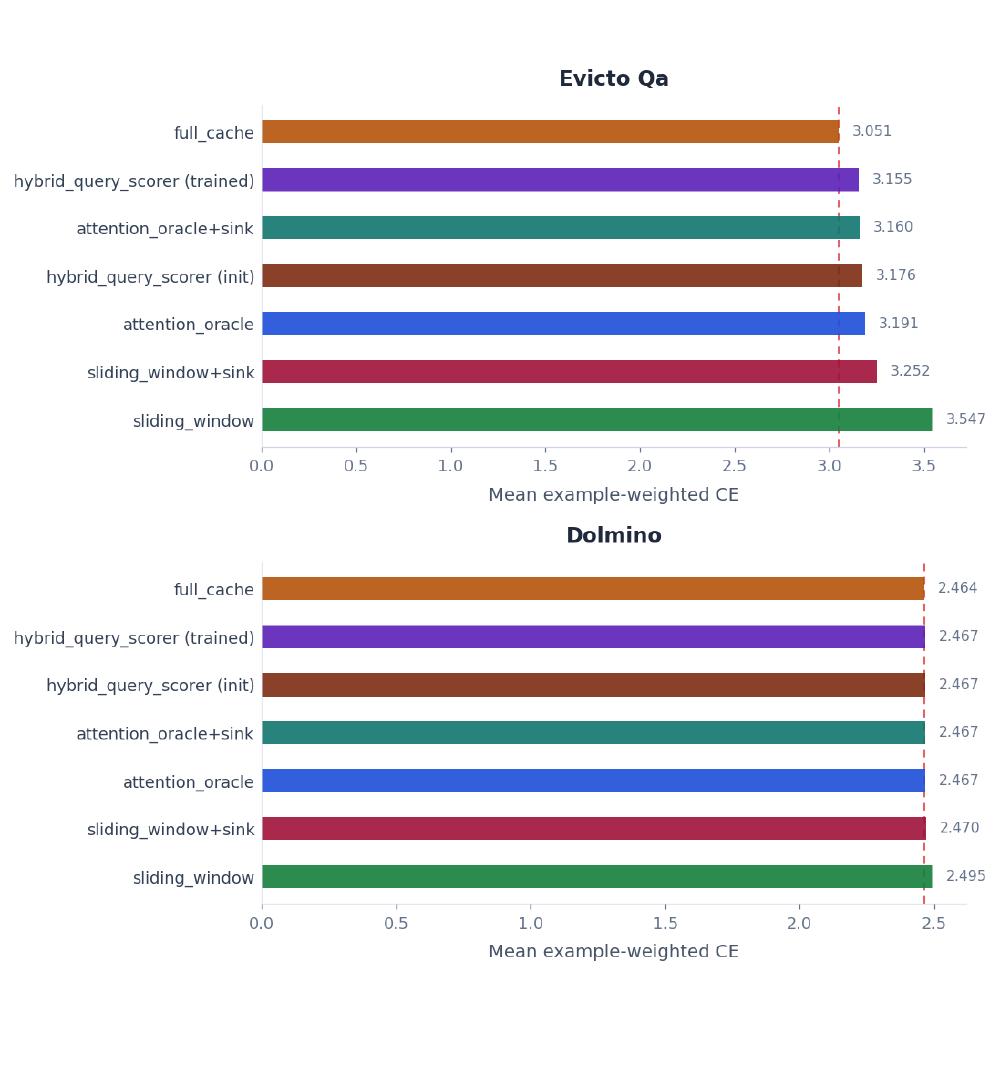

- Cache size: 512

- Chunk size: 16

- Eviction layers: 11, 12

Attention sinks are a result of the positional encodings. If we remove RoPE, perhaps we won’t need the protected chunks for attention sinks.
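To make the chunk budget concrete, here is a minimal sketch of chunk-level eviction under the settings above (cache size 512, chunk size 16, so 32 chunks). It is illustrative only. `EvictionConfig`, `select_chunks`, the per-chunk `scores`, and the default of one protected leading chunk are all assumptions, not the actual implementation. The scores stand in for whatever predictor estimates what future queries will need, and the restriction to layers 11 and 12 is not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvictionConfig:
    cache_size: int = 512      # tokens kept in the KV cache (from the notes)
    chunk_size: int = 16       # tokens per eviction unit (from the notes)
    protected_chunks: int = 1  # ASSUMPTION: leading chunks kept for attention sinks

    @property
    def max_chunks(self) -> int:
        # Number of whole chunks that fit in the cache budget.
        return self.cache_size // self.chunk_size


def select_chunks(scores: list[float], cfg: EvictionConfig) -> list[int]:
    """Return sorted indices of chunks to keep, given one score per chunk.

    `scores` is a hypothetical importance estimate per chunk (higher = keep).
    """
    n = len(scores)
    if n <= cfg.max_chunks:
        return list(range(n))  # under budget, nothing to evict

    # Leading chunks are always kept, regardless of score.
    protected = list(range(min(cfg.protected_chunks, n, cfg.max_chunks)))
    budget = cfg.max_chunks - len(protected)

    # Highest-scoring unprotected chunks fill the remaining budget.
    candidates = range(len(protected), n)
    best = sorted(candidates, key=lambda i: scores[i], reverse=True)[:budget]
    return sorted(protected + best)
```

Setting `protected_chunks=0` corresponds to the no-RoPE hypothesis: if sinks disappear, the first chunk competes on score like any other.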